Mostbet BD – Betting Company Mostbet Bangladesh

Mostbet is one of the most popular bookmakers in Bangladesh, offering safe sports betting and convenient online casino gaming. The pre-match section covers more than 35 sports, including football, and the Bangladesh Premier League is also available. Bangladeshi users can access both the sportsbook and the online casino through the Mostbet website. According to the bookmaker’s data, more than 1 million users have already joined Mostbet, and the number of daily bets across the website and mobile app has exceeded 800,000.

| Feature | Details |
|---|---|
| Bookmaker Name | Mostbet |
| Company | Bizbon N.V. |
| Founded | 2009 |
| Licence | 8048/JAZ2016-065 |
| Address | Stasinou 1, Mitsi Building 1, 1st Floor, Flat/Office 4, Plateia Eleftherias, 1060, Nicosia, Cyprus |
| Number of Sports (Pre-Match) | 35+ |
| Number of Sports (Live) | Around 20 |
| Number of Casino Products | 7,000+ |
| Payment Methods | bKash, Nagad, Rocket, Ripple, Bitcoin Cash, USDT, ETH, LTC, DOGE, BNB and other cryptocurrencies |
| Minimum Deposit | 300 BDT |
| Maximum Deposit | 50,000 BDT |
| First Deposit Bonus | Up to 125% (maximum 25,000 BDT) + 250 FS |
| No Deposit Bonus | 5 free bets on Aviator or 30 free spins on top 5 user-selected slot machines |
| Mobile App | Android, iOS |
| Website Languages | English, Bengali, Hindi |
| Support | Online chat (on site), Telegram bot, feedback form |

First Deposit Bonus and Other Promotions

Registering on the Mostbet website is quick and simple: click the “Register” button in the top right corner and fill in one of the forms in the pop-up window. The following registration methods are available:

- One-click: The fastest method where login and password are generated automatically.

- By mobile number: Choose your preferred currency for the future account, enter your mobile number and verify it.

- By email: Create an account by providing an email address and setting a password.

- Via social networks: Link your social media account to register quickly.

- Extended method: Register by providing additional information.

Whichever registration method is chosen, users must complete their personal profile and provide correct information in all required fields (marked with *). To receive a welcome bonus, users must also choose one of two options: a bonus for sports betting or a bonus for casino gaming.

Here is a list of the bonuses and promotions available for online sports betting with Mostbet:

- First Deposit Bonus: A bonus of up to 25,000 BDT for new users.

- Bonus Madness: Additional bonuses available on the second and third deposits.

- Mostbet Loyalty Programme: Collect coins and convert them into bonuses.

- Mostbet Birthday Offer: Free bets and free spins on your birthday.

- Express Bet Insurance: Free bet protection on express bets.

- Bet Buyback: Sell a bet back before it is settled and recover part of its value.

- Invite Friends: Earn extra bonuses through the referral programme.

- Odds Boost 40%: Increased odds for express bets.

- Express Booster: Extra perks for express bet enthusiasts.

- Lucky Loser: Special bonus after 10 consecutive losing bets.

- Risk-Free Promo: Opportunity to place risk-free bets on selected scores.

- Friday Offer: Special bonus on Friday deposits.

New users receive a 100% welcome bonus on their first deposit, up to a maximum of 25,000 BDT. If the deposit is made within 15 minutes of registration, the bonus increases to 125%. Players can also earn five free bets for the popular Aviator game at the Mostbet casino.
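
The bonus rule above can be expressed as a short calculation. This is a minimal Python sketch, assuming the figures stated in this review: a 100% rate, 125% if the deposit is made within 15 minutes of registration, a 25,000 BDT cap and the 300 BDT minimum deposit from the summary table. The function name `welcome_bonus` is hypothetical, and the 15-minute boundary is treated as inclusive, which Mostbet's official terms may define differently.

```python
# Hypothetical helper: figures taken from this review, not from Mostbet's official terms.
MIN_FIRST_DEPOSIT_BDT = 300
BONUS_CAP_BDT = 25_000.0
STANDARD_RATE = 1.00  # 100% bonus
FAST_RATE = 1.25      # 125% bonus for a quick first deposit
FAST_WINDOW_MIN = 15  # assumed inclusive window, in minutes after registration


def welcome_bonus(deposit_bdt: float, minutes_since_registration: float) -> float:
    """Return the first-deposit bonus in BDT under the assumptions above."""
    if deposit_bdt < MIN_FIRST_DEPOSIT_BDT:
        return 0.0  # below the minimum deposit, so no bonus is assumed
    rate = FAST_RATE if minutes_since_registration <= FAST_WINDOW_MIN else STANDARD_RATE
    # The bonus can never exceed the cap, whatever the deposit size
    return min(deposit_bdt * rate, BONUS_CAP_BDT)


print(welcome_bonus(10_000, 5))   # 12500.0
print(welcome_bonus(10_000, 60))  # 10000.0
print(welcome_bonus(30_000, 5))   # 25000.0
```

Under these assumptions, any deposit above 20,000 BDT at the 125% rate already reaches the 25,000 BDT cap.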

To wager the first-deposit bonus, users must place accumulator bets totalling five times the deposit amount, each with at least three events and odds of 1.40 or higher. Users who place at least one bet every day for a week receive a special bonus on their first Friday deposit, which is subject to the same wagering condition.
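
The wagering condition can be checked mechanically: only accumulators that meet the selection and odds requirements count towards a target of five times the deposit. The sketch below is a hypothetical illustration, assuming that the 1.40 minimum applies to every selection in the accumulator and that the stake of each qualifying bet counts in full. The `Accumulator` class and the `qualifies` and `remaining_wager` functions are invented for this example.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical sketch: rules paraphrased from this review, not Mostbet's official terms.
MIN_SELECTIONS = 3    # at least three events per accumulator
MIN_ODDS = 1.40       # assumed to apply to every selection
WAGER_MULTIPLIER = 5  # turnover target: five times the deposit


@dataclass
class Accumulator:
    stake: float
    odds: List[float]  # decimal odds of each selection


def qualifies(bet: Accumulator) -> bool:
    """True if the accumulator meets the assumed selection and odds rules."""
    return len(bet.odds) >= MIN_SELECTIONS and all(o >= MIN_ODDS for o in bet.odds)


def remaining_wager(deposit: float, bets: List[Accumulator]) -> float:
    """Amount still to be staked on qualifying accumulators (never below zero)."""
    target = WAGER_MULTIPLIER * deposit
    wagered = sum(b.stake for b in bets if qualifies(b))
    return float(max(target - wagered, 0))


bets = [
    Accumulator(2_000, [1.50, 1.60, 1.40]),  # counts: 3 selections, all >= 1.40
    Accumulator(1_000, [1.50, 1.60]),        # does not count: only 2 selections
    Accumulator(500, [1.20, 1.90, 2.00]),    # does not count: one selection below 1.40
]
print(remaining_wager(1_000, bets))  # 3000.0
```

In this example, only the first accumulator counts, so 3,000 BDT of the 5,000 BDT target remains.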

Mostbet offers a loyalty programme for regular users. Players can earn special points (coins), which they can later exchange for bonuses in sports betting or casino gaming. These coins can be used as an alternative to deposits and are also given as rewards for completing special quizzes.

By completing special tasks in the sportsbook and casino sections, players can raise their status and earn coins, free bets, and free spins. The higher the status, the more complex the tasks become, but the rate at which coins convert into bonuses also improves.

What Can You Bet on at Mostbet in Bangladesh?

Cricket and football have long been the most popular sports in Bangladesh. In recent years, kabaddi has also gained significant popularity. In addition to these popular sports, Bangladeshi players can place bets on horse racing in both pre-match and live formats on the Mostbet website.

Live Betting at Mostbet

Compared with the pre-match line, the Mostbet live line offers a wider range of events and bet types, because some matches in the sportsbook are available only in live mode. Bangladeshi players can watch live video streams of events along with key statistics that update as the game progresses. The match is also shown graphically in a match tracker, which highlights key moments such as dangerous attacks and fouls.

Football Betting

Mostbet Bangladesh’s football line covers top events and tournaments, including various Asian championships such as those of Bangladesh and India. In the live section, users can bet not only on major leagues but also on lower-division matches. Bangladeshi users can bet on both men’s and women’s championships. One of the strongest teams in the country, Bashundhara Kings, is popular not only in Bangladesh but also among European players.

Cricket Betting

Due to historical ties between Bangladesh, India, and Great Britain, cricket is widely popular in these countries. The Bangladesh national team regularly takes part in World Cups and other prestigious tournaments, making cricket one of the country's leading sports. Mostbet offers betting on major cricket tournaments, including Bangladeshi championships and national team matches. The line covers not only tournament winners, total runs, and handicaps, but also player statistics, top bowlers, and a variety of other markets.

Kabaddi Betting

Kabaddi is an ancient sport that is especially popular in Bangladesh, India, and Pakistan. The game combines offence and defence: a raider must tag one or more opponents and return to their own half without being tackled. In recent years, kabaddi has attracted a growing audience, which has led to its inclusion in sportsbook lines. Mostbet offers betting on professional tournaments, including local and international kabaddi championships, with markets on the winner, total points, and handicaps.

Horse Racing Betting

Horse racing is a prestigious form of entertainment that has historically been extremely popular in countries influenced by Great Britain. In Bangladesh, both horse racing and betting on it are popular. Mostbet’s sportsbook includes events from top racetracks and offers a wide variety of markets, including the race winner, top-three finishers, head-to-head comparisons between horses, and other popular options. For certain racetracks, live broadcasts are also available, so races can be watched in real time from the start.

Casino and Aviator at Mostbet Bangladesh

Mostbet offers not only sports betting but also online casino services. The official website provides access to various casino sections:

- Casino: Slot machines and slot games

- Live Casino: Games with live dealers

- Aviator: Popular crash game

- Bonus Buy: Games with the option to purchase bonuses

- Poker: Poker tables and tournaments

Mostbet Aviator is one of the most popular games among users. Developed by the Georgian provider Spribe, it popularised crash games in online casinos. In Aviator, players bet on an aircraft: as it climbs, the multiplier increases, but the plane can disappear from the radar at any moment. If the aircraft flies away before the player cashes out, the entire bet is lost. While the plane is still on screen, players can click a button to cash out, or set an automatic cash-out at a predefined multiplier. Various strategies can be applied in Aviator, but players should remember that the game runs on a Random Number Generator (RNG) and that all rules are subject to the terms and conditions.
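
The cash-out rule described above reduces to a simple settlement function. This minimal sketch assumes that the payout is the stake multiplied by the cash-out multiplier (so it includes the original stake) and that a cash-out counts only if it happens strictly before the crash point. The names `settle_round`, `cash_out_at` and `crash_at` are hypothetical, and the sketch does not model how the RNG produces the crash point.

```python
from typing import Optional


def settle_round(stake: float, cash_out_at: Optional[float], crash_at: float) -> float:
    """Return the payout for one Aviator-style round (hypothetical model).

    cash_out_at: multiplier at which the player cashed out (manually or via
                 auto cash-out), or None if the player never cashed out.
    crash_at:    multiplier at which the plane flew away.
    """
    if cash_out_at is not None and cash_out_at < crash_at:
        return stake * cash_out_at  # cashed out in time: stake times multiplier
    return 0.0  # plane flew away first: the whole stake is lost


print(settle_round(100, 2.0, 3.5))   # 200.0
print(settle_round(100, 2.0, 1.8))   # 0.0
print(settle_round(100, None, 5.0))  # 0.0
```

An automatic cash-out behaves like a manual one in this model: the preset multiplier is simply passed as `cash_out_at`.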

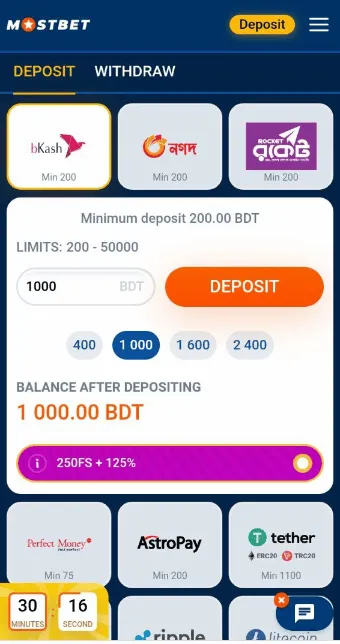

Re-Deposit Methods

The following methods are available for repeat deposits (minimum amounts in brackets):

- bKash (200 BDT)

- Nagad (200 BDT)

- Rocket (200 BDT)

- Perfect Money (75 BDT)

- AstroPay (200 BDT)

- Tether (USDT) (1,100 BDT)

- Ethereum (5,500 BDT)

- Ripple (1,300 BDT)

- Litecoin (600 BDT)

- Bitcoin Cash (600 BDT)

- Binance Coin (BNB) (800 BDT)

- Dogecoin (900 BDT)

- Dash (800 BDT)

- Zcash (1,700 BDT)

- Bitcoin (3,000 BDT)

Withdrawal Methods

To withdraw funds from the Mostbet website, all required fields marked with an asterisk in the profile must be filled in with accurate personal information. Once this condition is met, the following methods can be used to make a withdrawal:

- bKash, Nagad, Rocket payment systems

- AstroPay electronic wallet

- Tether and Bitcoin cryptocurrencies

For a withdrawal, the exact details of the account or wallet used for the initial deposit must be provided. To make the process smoother, we recommend making at least one deposit with a payment method before using it to withdraw.

Mostbet Mobile Website and Apps

| Requirement | Android | iOS |
|---|---|---|
| Cost | Free | Free |
| Download | Official website | Official website, App Store |
| Operating system | Android 5.0 and above | iOS 11.0 and above |
| Installer file size | Approx. 25 MB | – |
| Size after installation | Approx. 100 MB | Approx. 250 MB |
| Supported devices | Smartphones, tablets | iPhone and iPad |
| User interface | Optimised for various screen sizes | Special iOS-optimised version |
| RAM | 1 GB | 1 GB |
| Processor | 1.2 GHz dual-core | Apple A7 |

Mostbet provides all the necessary tools for users to easily play on mobile devices:

- Mobile app for Android

- App for iOS

- Mobile version of the website

The mobile website and apps offer functionality similar to the desktop version, although each version has some unique features. The app provides the fastest access: once installed on a phone, it loads significantly faster than the website.

The Mostbet iOS app can be downloaded from the App Store. The Android version, however, is not available on Google Play, because Google Play’s policies restrict real-money gambling apps. The Mostbet Android app can therefore only be downloaded from the company’s official website or from selected official app stores of certain smartphone manufacturers.

In some countries, offshore sportsbooks are illegal, and internet service providers may block such platforms. However, by using the Mostbet mobile app, this blocking can be easily bypassed. The app does not have a fixed permanent web address and can operate using anonymous sources, making it difficult for providers to restrict access.

Users who regularly use Mostbet’s betting and casino services are recommended to play through the app, which can also be used to watch live events. Watching broadcasts requires a registered account with a deposit of any amount. The mobile version of the website also supports betting, casino games, and live broadcasts, making it an effective alternative to the app.

Support Service

Mostbet provides support to users through various communication channels. Players can contact the support team via the following methods:

- Contact form with administrator: Available in the “Contacts” section at the bottom of the site

- Live chat: Available in the bottom right corner of the website

- Email: [email protected]

- Telegram chat: Link available in the “Contacts” section

Replies in live chat are usually provided within 3–5 minutes. Customer support for Bangladeshi users is offered in English. If a user fills in the contact form, a reply will be sent to the email address used during registration, and all further communication will be conducted via email.

Advantages and Disadvantages of Mostbet BD

Advantages:

- Excellent range of events and games

- Coverage of the most popular sports in Bangladesh

- Deposit bonus up to 25,000 BDT

- Account registration in BDT

- Convenient payment methods for Bangladeshi users

- High betting limits

- Event broadcasts available on the website

- Mobile apps for iOS and Android

Disadvantages:

- Offshore (foreign) licence

- Verification process can take a long time

FAQ

Is it safe to play on Mostbet?

Mostbet is licensed in Curaçao, follows fair play rules, and guarantees transparent payouts. It is also popular among Bangladeshi players.

How can I get a no deposit bonus?

To receive a no deposit bonus in the form of free spins or free bets, you need to create an account via the Mostbet website or mobile app.

What is the minimum deposit amount for Bangladeshi players?

The minimum deposit is 300 BDT.

Where can I find the Mostbet mirror link?

The safest way is to request a working and up-to-date mirror link directly from customer support.

Can I deposit using cryptocurrency?

Yes, Mostbet accepts over 10 popular cryptocurrencies for payments, including ETH, BNB, DOGE, LTC, and USDT.

Is verification mandatory?

Yes, account verification is required before making your first withdrawal on Mostbet.

Rating ⭐️⭐️⭐️⭐️